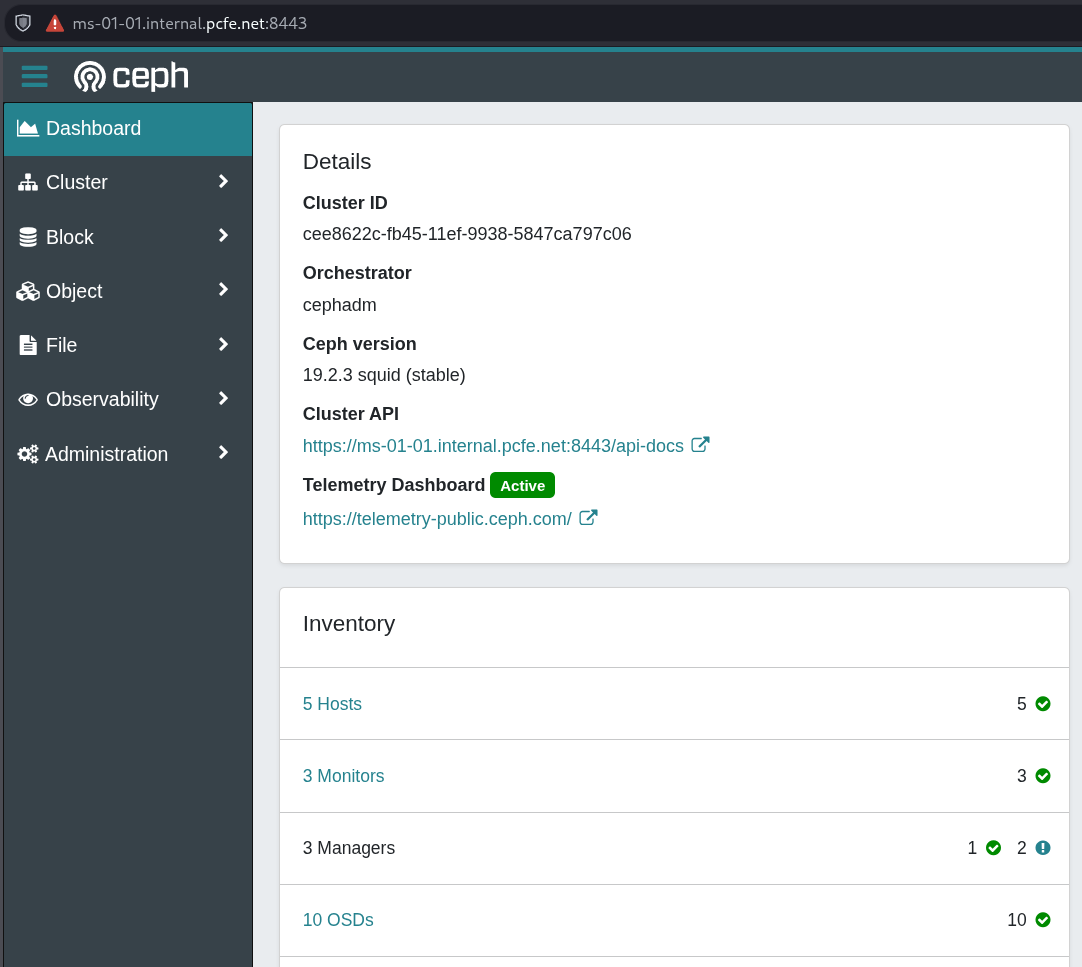

Minisforum MS-01, CentOS Stream 9, Ceph Squid install

Doh! I installed Ceph Squid 19 back in early March, but I forgot to publish the braindump I’d written for this blog.

It was really just a matter of following the official "Using cephadm to Deploy a New Ceph Cluster" guide for Squid, the latest release at the time of install.